Hi everyone, inside this article we will see the concept about AVL Trees.

AVL trees are a type of self-balancing binary search tree in data structures and algorithms. They were named after their inventors, Adelson-Velsky and Landis.

In an AVL tree, the height of the left and right subtrees of any node differs by at most one. This property helps to ensure that the tree remains balanced, with a worst-case time complexity of O(log n) for operations like searching, insertion, and deletion.

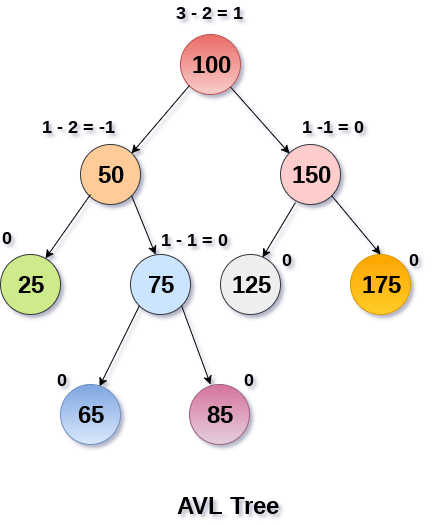

Balance Factor in AVL Trees

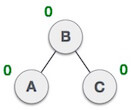

In AVL trees, the balance factor of a node is a measure of how balanced the tree is at that node. The balance factor is defined as the difference between the height of the left subtree and the height of the right subtree.

More formally, the balance factor of a node N is defined as:

balanceFactor(N) = height(leftSubtree(N)) - height(rightSubtree(N))If the balance factor of a node is 0, then the left and right subtrees have equal height, and the tree is considered balanced at that node. If the balance factor is positive, then the left subtree is taller than the right subtree, and the node is considered “left-heavy”. If the balance factor is negative, then the right subtree is taller than the left subtree, and the node is considered “right-heavy”.

In an AVL tree, every node must have a balance factor of either 0, 1, or -1. If a node has a balance factor that is greater than 1 or less than -1, then the tree is considered unbalanced at that node, and an AVL rotation is required to restore balance to the tree.

Types of Operations on AVL Tree

Here are the types of operations on AVL trees:

| Operation | Description | Time Complexity |

|---|---|---|

| Search | Find a node with a given key in the AVL tree | O(log n) |

| Insertion | Insert a new node with a given key into the AVL tree | O(log n) |

| Deletion | Remove a node with a given key from the AVL tree | O(log n) |

| Traversal | Visit each node in the AVL tree in a specified order | O(n) |

Note that the time complexity of all AVL tree operations is O(log n) for search, insertion, and deletion, and O(n) for traversal. This is because AVL trees are self-balancing, and therefore maintain a height that is proportional to log(n). This ensures that search, insertion, and deletion operations can be performed efficiently in worst-case time complexity.

In comparison to other self-balancing binary search trees, such as red-black trees, AVL trees have a slightly higher overhead due to the need to perform rotations to maintain balance. However, this overhead is generally small and is offset by the more predictable and consistent performance of AVL trees, especially in applications where there are more search and retrieval operations than insertions or deletions.

Here is the pseudocode for AVL tree operations:

AVL Tree Node Structure:

struct Node {

int key;

int height;

Node* left;

Node* right;

};

AVL Tree Height Calculation:

int height(Node* node) {

if (node == NULL) {

return 0;

}

return node->height;

}AVL Tree Right Rotation:

Node* rightRotate(Node* y) {

Node* x = y->left;

Node* T2 = x->right;

x->right = y;

y->left = T2;

y->height = max(height(y->left), height(y->right)) + 1;

x->height = max(height(x->left), height(x->right)) + 1;

return x;

}

AVL Tree Left Rotation:

Node* leftRotate(Node* x) {

Node* y = x->right;

Node* T2 = y->left;

y->left = x;

x->right = T2;

x->height = max(height(x->left), height(x->right)) + 1;

y->height = max(height(y->left), height(y->right)) + 1;

return y;

}AVL Tree Get Balance Factor:

int getBalance(Node* node) {

if (node == NULL) {

return 0;

}

return height(node->left) - height(node->right);

}

AVL Tree Insertion:

Node* insert(Node* node, int key) {

if (node == NULL) {

Node* newNode = new Node;

newNode->key = key;

newNode->height = 1;

newNode->left = NULL;

newNode->right = NULL;

return newNode;

}

if (key < node->key) {

node->left = insert(node->left, key);

} else if (key > node->key) {

node->right = insert(node->right, key);

} else {

return node;

}

node->height = max(height(node->left), height(node->right)) + 1;

int balance = getBalance(node);

if (balance > 1 && key < node->left->key) {

return rightRotate(node);

}

if (balance < -1 && key > node->right->key) {

return leftRotate(node);

}

if (balance > 1 && key > node->left->key) {

node->left = leftRotate(node->left);

return rightRotate(node);

}

if (balance < -1 && key < node->right->key) {

node->right = rightRotate(node->right);

return leftRotate(node);

}

return node;

}

Complexity Analysis

Here is the complexity table of AVL tree operations:

| Operation | Time Complexity |

|---|---|

| Search | O(log n) |

| Insertion | O(log n) |

| Deletion | O(log n) |

Note that the time complexity of all AVL tree operations is O(log n), where n is the number of nodes in the tree. This is because AVL trees are self-balancing, and therefore maintain a height that is proportional to log(n). This ensures that search, insertion, and deletion operations can be performed efficiently in worst-case time complexity.

Why we use AVL Tree?

AVL trees are an important data structure in computer science and are used in a wide range of applications because of their efficient performance for search, insertion, and deletion operations. Here are some reasons why we need to use AVL trees:

- Guaranteed logarithmic time complexity: AVL trees are self-balancing, and therefore guarantee a logarithmic time complexity for search, insertion, and deletion operations. This makes them ideal for applications where fast retrieval of data is important, such as in database management systems, compilers, and web search engines.

- Efficient memory usage: AVL trees are space-efficient because they do not store any additional information beyond the key and pointers to the left and right subtrees. This makes them ideal for applications where memory usage is a concern, such as in embedded systems or mobile devices.

- Versatile: AVL trees can be used to store any type of data that can be sorted, including integers, floating-point numbers, strings, and complex objects.

- Maintains order: AVL trees maintain the order of the data in the tree, which can be important for certain applications, such as maintaining a sorted list of data.

- Easy to implement: AVL trees are easy to implement and maintain, and are a popular choice for data storage in programming languages such as C++ and Java.

In summary, AVL trees are an efficient and versatile data structure that can be used in a wide range of applications, and offer guaranteed logarithmic time complexity for search, insertion, and deletion operations.

AVL Rotations

AVL rotations are a technique used to maintain the balance of an AVL tree during insertion and deletion operations.

Here are the four types of AVL rotations:

| Rotation Type | Description |

|---|---|

| Left-Left | Occurs when a node is inserted into the left subtree of the left child of a node, causing the left subtree to be taller than the right subtree by 2. In this case, a single right rotation is performed to rebalance the subtree. |

| Left-Right | Occurs when a node is inserted into the right subtree of the left child of a node, causing the left subtree to be shorter than the right subtree by 2. In this case, a left rotation is performed on the left child, followed by a right rotation on the unbalanced node to rebalance the subtree. |

| Right-Left | Occurs when a node is inserted into the left subtree of the right child of a node, causing the right subtree to be shorter than the left subtree by 2. In this case, a right rotation is performed on the right child, followed by a left rotation on the unbalanced node to rebalance the subtree. |

| Right-Right | Occurs when a node is inserted into the right subtree of the right child of a node, causing the right subtree to be taller than the left subtree by 2. In this case, a single left rotation is performed to rebalance the subtree. |

Note that in each of these rotations, the subtree rooted at the unbalanced node is rebalanced by performing a series of left and right rotations. These rotations ensure that the AVL tree maintains its balance property, which guarantees logarithmic time complexity for search, insertion, and deletion operations.

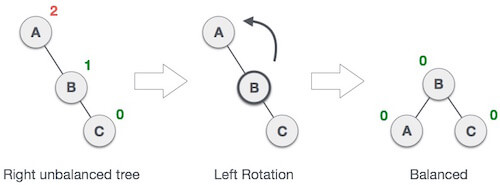

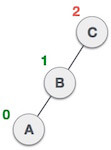

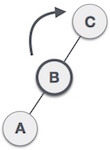

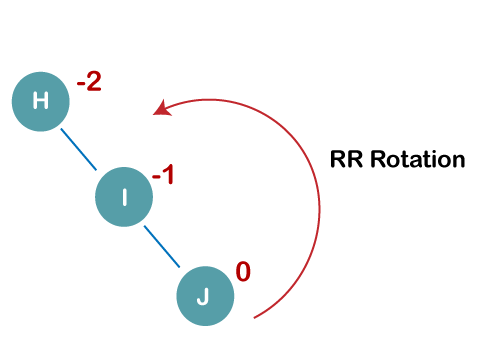

RR Rotation

When BST becomes unbalanced, due to a node is inserted into the right subtree of the right subtree of A, then we perform RR rotation, RR rotation is an anticlockwise rotation, which is applied on the edge below a node having balance factor -2

In above example, node A has balance factor -2 because a node C is inserted in the right subtree of A right subtree. We perform the RR rotation on the edge below A.

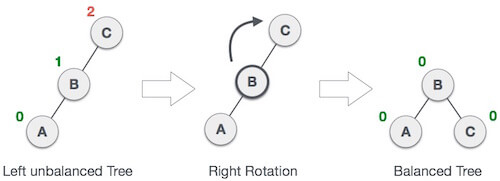

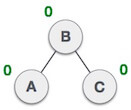

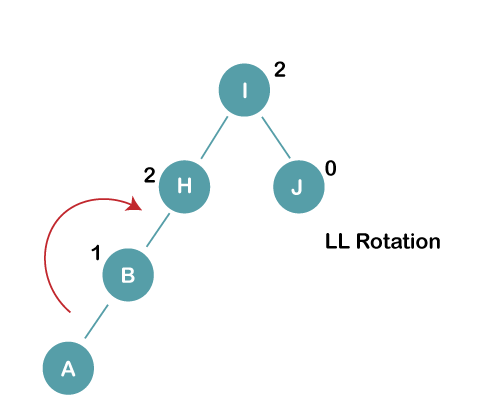

LL Rotation

When BST becomes unbalanced, due to a node is inserted into the left subtree of the left subtree of C, then we perform LL rotation, LL rotation is clockwise rotation, which is applied on the edge below a node having balance factor 2.

In above example, node C has balance factor 2 because a node A is inserted in the left subtree of C left subtree. We perform the LL rotation on the edge below A.

LR Rotation

Double rotations are bit tougher than single rotation which has already explained above. LR rotation = RR rotation + LL rotation, i.e., first RR rotation is performed on subtree and then LL rotation is performed on full tree, by full tree we mean the first node from the path of inserted node whose balance factor is other than -1, 0, or 1.

| State | Action |

|---|---|

| A node B has been inserted into the right subtree of A the left subtree of C, because of which C has become an unbalanced node having balance factor 2. This case is L R rotation where: Inserted node is in the right subtree of left subtree of C |

| As LR rotation = RR + LL rotation, hence RR (anticlockwise) on subtree rooted at A is performed first. By doing RR rotation, node A, has become the left subtree of B. |

| After performing RR rotation, node C is still unbalanced, i.e., having balance factor 2, as inserted node A is in the left of left of C |

| Now we perform LL clockwise rotation on full tree, i.e. on node C. node C has now become the right subtree of node B, A is left subtree of B |

| Balance factor of each node is now either -1, 0, or 1, i.e. BST is balanced now. |

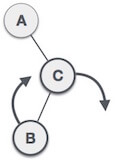

RL Rotation

As already discussed, that double rotations are bit tougher than single rotation which has already explained above. R L rotation = LL rotation + RR rotation, i.e., first LL rotation is performed on subtree and then RR rotation is performed on full tree, by full tree we mean the first node from the path of inserted node whose balance factor is other than -1, 0, or 1.

| State | Action |

|---|---|

| A node B has been inserted into the left subtree of C the right subtree of A, because of which A has become an unbalanced node having balance factor – 2. This case is RL rotation where: Inserted node is in the left subtree of right subtree of A |

| As RL rotation = LL rotation + RR rotation, hence, LL (clockwise) on subtree rooted at C is performed first. By doing RR rotation, node C has become the right subtree of B. |

| After performing LL rotation, node A is still unbalanced, i.e. having balance factor -2, which is because of the right-subtree of the right-subtree node A. |

| Now we perform RR rotation (anticlockwise rotation) on full tree, i.e. on node A. node C has now become the right subtree of node B, and node A has become the left subtree of B. |

| Balance factor of each node is now either -1, 0, or 1, i.e., BST is balanced now. |

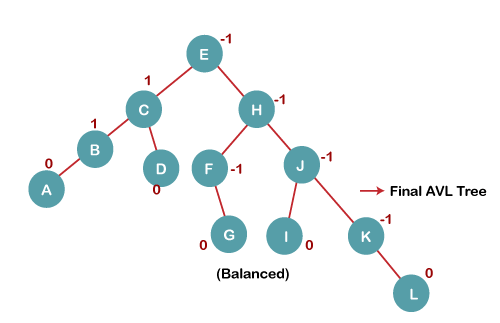

Construct an AVL tree having the Following Elements

H, I, J, B, A, E, C, F, D, G, K, L

To construct a balanced AVL tree for the given elements “H, I, J, B, A, E, C, F, D, G, K, L”, we can follow the steps below:

1. Insert H, I, J

On inserting the above elements, especially in the case of H, the BST becomes unbalanced as the Balance Factor of H is -2. Since the BST is right-skewed, we will perform RR Rotation on node H.

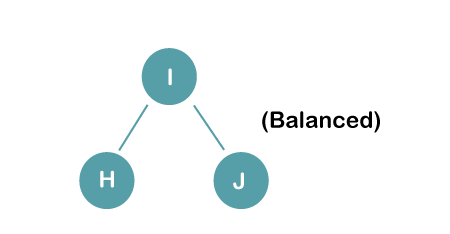

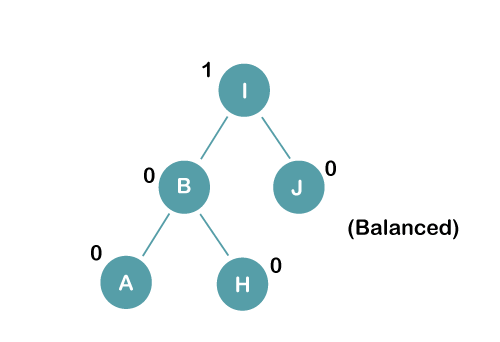

The resultant balance tree is:

2. Insert B, A

On inserting the above elements, especially in case of A, the BST becomes unbalanced as the Balance Factor of H and I is 2, we consider the first node from the last inserted node i.e. H. Since the BST from H is left-skewed, we will perform LL Rotation on node H.

The resultant balance tree is:

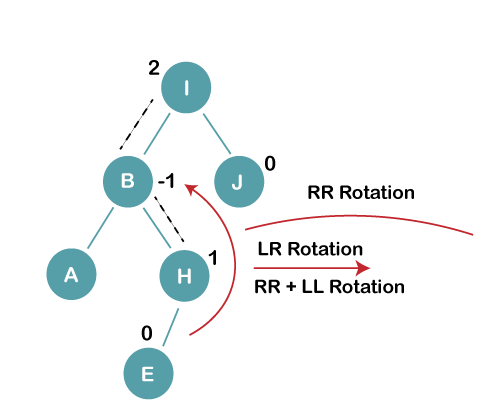

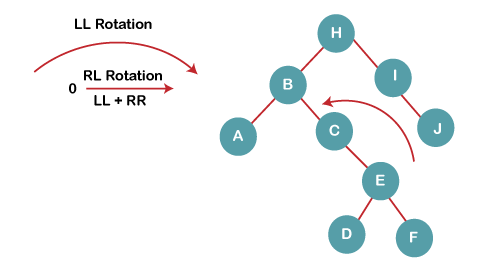

3. Insert E

On inserting E, BST becomes unbalanced as the Balance Factor of I is 2, since if we travel from E to I we find that it is inserted in the left subtree of right subtree of I, we will perform LR Rotation on node I. LR = RR + LL rotation

3 a) We first perform RR rotation on node B

The resultant tree after RR rotation is:

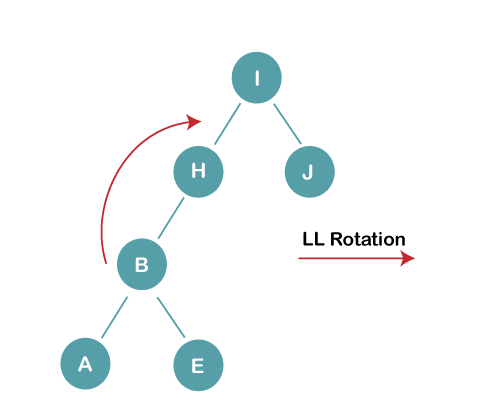

3b) We first perform LL rotation on the node I

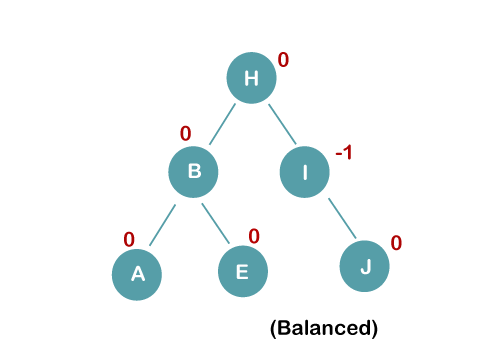

The resultant balanced tree after LL rotation is:

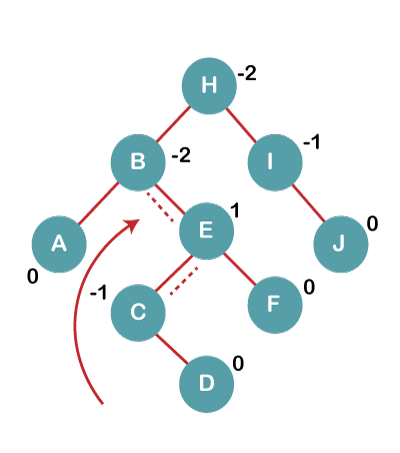

4. Insert C, F, D

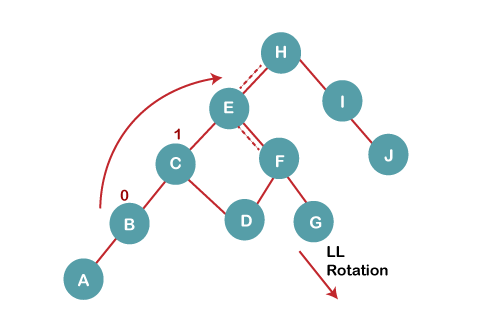

On inserting C, F, D, BST becomes unbalanced as the Balance Factor of B and H is -2, since if we travel from D to B we find that it is inserted in the right subtree of left subtree of B, we will perform RL Rotation on node I. RL = LL + RR rotation.

4a) We first perform LL rotation on node E

The resultant tree after LL rotation is:

4b) We then perform RR rotation on node B

The resultant balanced tree after RR rotation is:

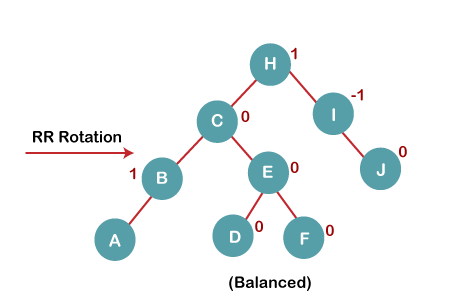

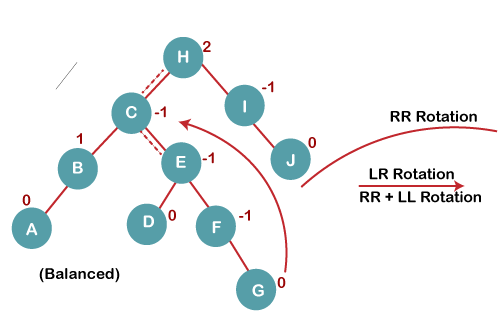

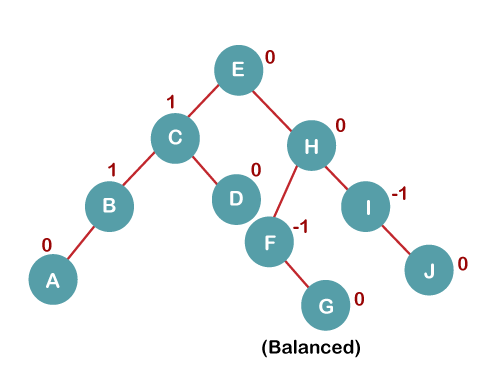

5. Insert G

On inserting G, BST become unbalanced as the Balance Factor of H is 2, since if we travel from G to H, we find that it is inserted in the left subtree of right subtree of H, we will perform LR Rotation on node I. LR = RR + LL rotation.

5 a) We first perform RR rotation on node C

The resultant tree after RR rotation is:

5 b) We then perform LL rotation on node H

The resultant balanced tree after LL rotation is:

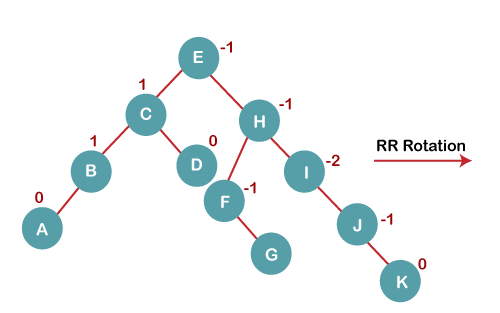

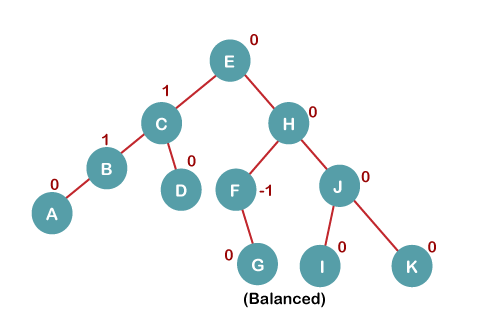

6. Insert K

On inserting K, BST becomes unbalanced as the Balance Factor of I is -2. Since the BST is right-skewed from I to K, hence we will perform RR Rotation on the node I.

The resultant balanced tree after RR rotation is:

7. Insert L

On inserting the L tree is still balanced as the Balance Factor of each node is now either, -1, 0, +1. Hence the tree is a Balanced AVL tree

A Basic C++ Program for an AVL Tree

Here’s the complete code for an AVL tree implementation in C++:

#include <iostream>

#include <algorithm>

using namespace std;

class AVL {

private:

struct Node {

int data;

Node* left;

Node* right;

int height;

};

Node* root;

int height(Node* node) {

if (node == nullptr) {

return -1;

} else {

return node->height;

}

}

int balanceFactor(Node* node) {

return height(node->left) - height(node->right);

}

void updateHeight(Node* node) {

node->height = max(height(node->left), height(node->right)) + 1;

}

Node* rotateRight(Node* node) {

Node* newRoot = node->left;

node->left = newRoot->right;

newRoot->right = node;

updateHeight(node);

updateHeight(newRoot);

return newRoot;

}

Node* rotateLeft(Node* node) {

Node* newRoot = node->right;

node->right = newRoot->left;

newRoot->left = node;

updateHeight(node);

updateHeight(newRoot);

return newRoot;

}

Node* balance(Node* node) {

updateHeight(node);

if (balanceFactor(node) == 2) {

if (balanceFactor(node->left) == -1) {

node->left = rotateLeft(node->left);

}

return rotateRight(node);

} else if (balanceFactor(node) == -2) {

if (balanceFactor(node->right) == 1) {

node->right = rotateRight(node->right);

}

return rotateLeft(node);

}

return node;

}

Node* insert(Node* node, int data) {

if (node == nullptr) {

Node* newNode = new Node();

newNode->data = data;

newNode->left = nullptr;

newNode->right = nullptr;

newNode->height = 0;

return newNode;

}

if (data < node->data) {

node->left = insert(node->left, data);

} else {

node->right = insert(node->right, data);

}

return balance(node);

}

void printInOrder(Node* node) {

if (node == nullptr) {

return;

}

printInOrder(node->left);

cout << node->data << " ";

printInOrder(node->right);

}

public:

AVL() {

root = nullptr;

}

void insert(int data) {

root = insert(root, data);

}

void printInOrder() {

printInOrder(root);

}

};

int main() {

AVL avl;

avl.insert(5);

avl.insert(2);

avl.insert(1);

avl.insert(4);

avl.insert(9);

avl.insert(8);

avl.insert(10);

avl.printInOrder(); // Output: 1 2 4 5 8 9 10

return 0;

}

This above program will output:

1 2 4 5 8 9 10This code defines an AVL class with private member functions for handling AVL tree operations like balancing, insertion, and printing the tree in order. The main function initializes an AVL object, inserts some data into the tree, and then prints the tree in order. Note that this is a very basic implementation, and you can modify it to add more functionality or improve its performance.

We hope this article helped you to understand AVL Trees in a very detailed way.

Online Web Tutor invites you to try Skillshike! Learn CakePHP, Laravel, CodeIgniter, Node Js, MySQL, Authentication, RESTful Web Services, etc into a depth level. Master the Coding Skills to Become an Expert in PHP Web Development. So, Search your favourite course and enroll now.

If you liked this article, then please subscribe to our YouTube Channel for PHP & it’s framework, WordPress, Node Js video tutorials. You can also find us on Twitter and Facebook.